First-Order Softmax Weighted Switching Gradient Method for Distributed Stochastic Minimax Optimization with Stochastic Constraints

Abstract

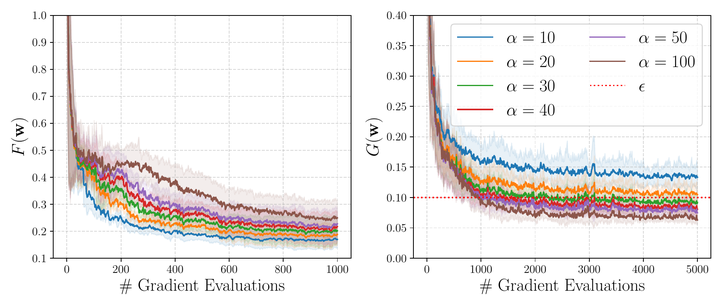

This paper addresses distributed stochastic minimax optimization subject to stochastic constraints. We propose a novel first-order Softmax-Weighted Switching Gradient method tailored to federated learning. Under full client participation, our algorithm achieves the standard oracle complexity to satisfy a unified bound for both the optimality gap and the feasibility tolerance. We extend the theoretical analysis to the practical partial participation regime by quantifying client sampling noise through a stochastic superiority assumption. Furthermore, by relaxing the standard boundedness assumptions on the objective functions, we establish a strictly tighter lower bound for the softmax hyperparameter. We provide a unified error decomposition and establish a sharp high-probability convergence guarantee. Our framework shows that a single-loop, primal-only switching mechanism is a stable alternative for optimizing worst-case client performance, avoiding the hyperparameter sensitivity and convergence oscillations often encountered in traditional primal-dual or penalty-based approaches. We verify the efficacy of our algorithm through experiments on Neyman-Pearson (NP) classification and fair classification tasks.
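To make the switching idea concrete, the following is a minimal illustrative sketch and not the paper's algorithm as specified. It assumes that each client reports a stochastic loss value, a loss gradient, a constraint value, and a constraint gradient; that the constraint is aggregated by a plain average; and that the worst-case client objective is smoothed by softmax weights with a hyperparameter `mu`. The names `softmax_weights`, `switching_step`, `mu`, `eps`, and `lr` are hypothetical and chosen only for illustration.

```python
import numpy as np

def softmax_weights(losses, mu):
    """Softmax weights over client losses; larger mu concentrates on the worst client."""
    z = mu * np.asarray(losses, dtype=float)
    z -= z.max()  # subtract the maximum for numerical stability
    w = np.exp(z)
    return w / w.sum()

def switching_step(x, obj_vals, obj_grads, con_vals, con_grads, mu, eps, lr):
    """One primal-only switching step (illustrative assumption, not the paper's exact rule).

    If the averaged constraint estimate exceeds the tolerance eps, step along the
    averaged constraint gradient; otherwise step along the softmax-weighted
    combination of client objective gradients.
    """
    obj_grads = np.asarray(obj_grads, dtype=float)
    con_grads = np.asarray(con_grads, dtype=float)
    if np.mean(con_vals) > eps:
        # Constraint looks violated: reduce the constraint value.
        direction = con_grads.mean(axis=0)
        used_objective = False
    else:
        # Constraint looks satisfied: reduce the smoothed worst-case objective.
        w = softmax_weights(obj_vals, mu)
        direction = w @ obj_grads
        used_objective = True
    return x - lr * direction, used_objective
```

In this sketch no dual variable or penalty coefficient is maintained; the only decision per iteration is which gradient to follow, which is the sense in which the mechanism is single-loop and primal-only.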