Characterizing Evolution in Expectation-Maximization Estimates for Overspecified Mixed Linear Regression

Abstract

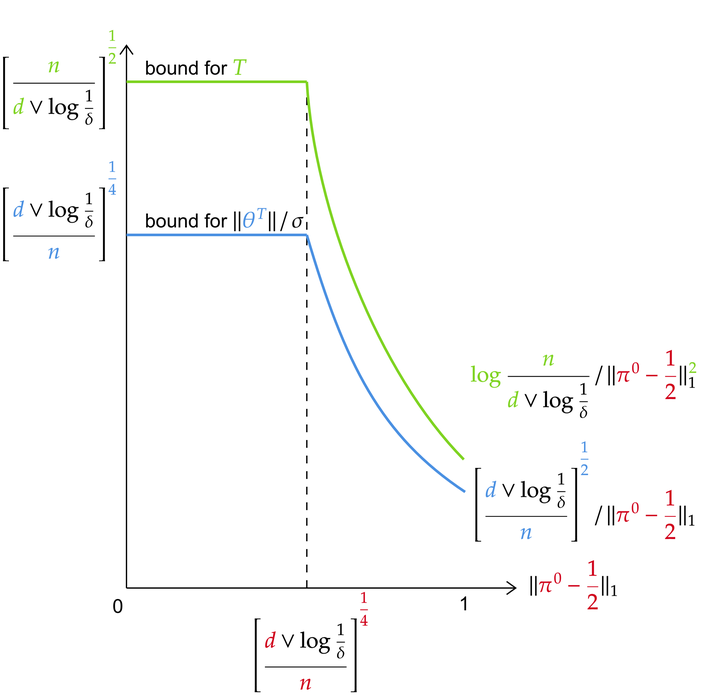

Estimating data distributions using parametric families is crucial in many learning setups, serving both as a standalone problem and an intermediate objective for downstream tasks. Mixture models, in particular, have attracted significant attention due to their practical effectiveness and comprehensive theoretical foundations. A persisting challenge is model misspecification, which occurs when the model to be fitted has more mixture components than those in the data distribution. In this paper, we develop a theoretical understanding of the Expectation-Maximization (EM) algorithm’s behavior in the context of targeted model misspecification for overspecified two-component Mixed Linear Regression (2MLR) with unknown regression parameters and mixing weights. We rigorously characterize the evolution of EM estimates for both regression parameters and mixing weights of overspecified MLR models by providing approximate dynamic equations for EM update rules and establishing convergence guarantees for final accuracy, time complexity, and sample complexity at population and finite-sample levels, respectively. We further extend our analysis in the overspecified setting to the finite low SNR regime, providing approximate dynamic equations that characterize the EM algorithm’s behavior in this challenging case. Our new findings not only expand the scope of theoretical convergence but also improve the bounds for statistical error, time complexity, and sample complexity, and rigorously characterize the evolution of EM estimates.